In physics, the classical 'Hall effect,' discovered in the late 19th century, describes how a transverse voltage is generated when an electric current is exposed to a perpendicular magnetic field.

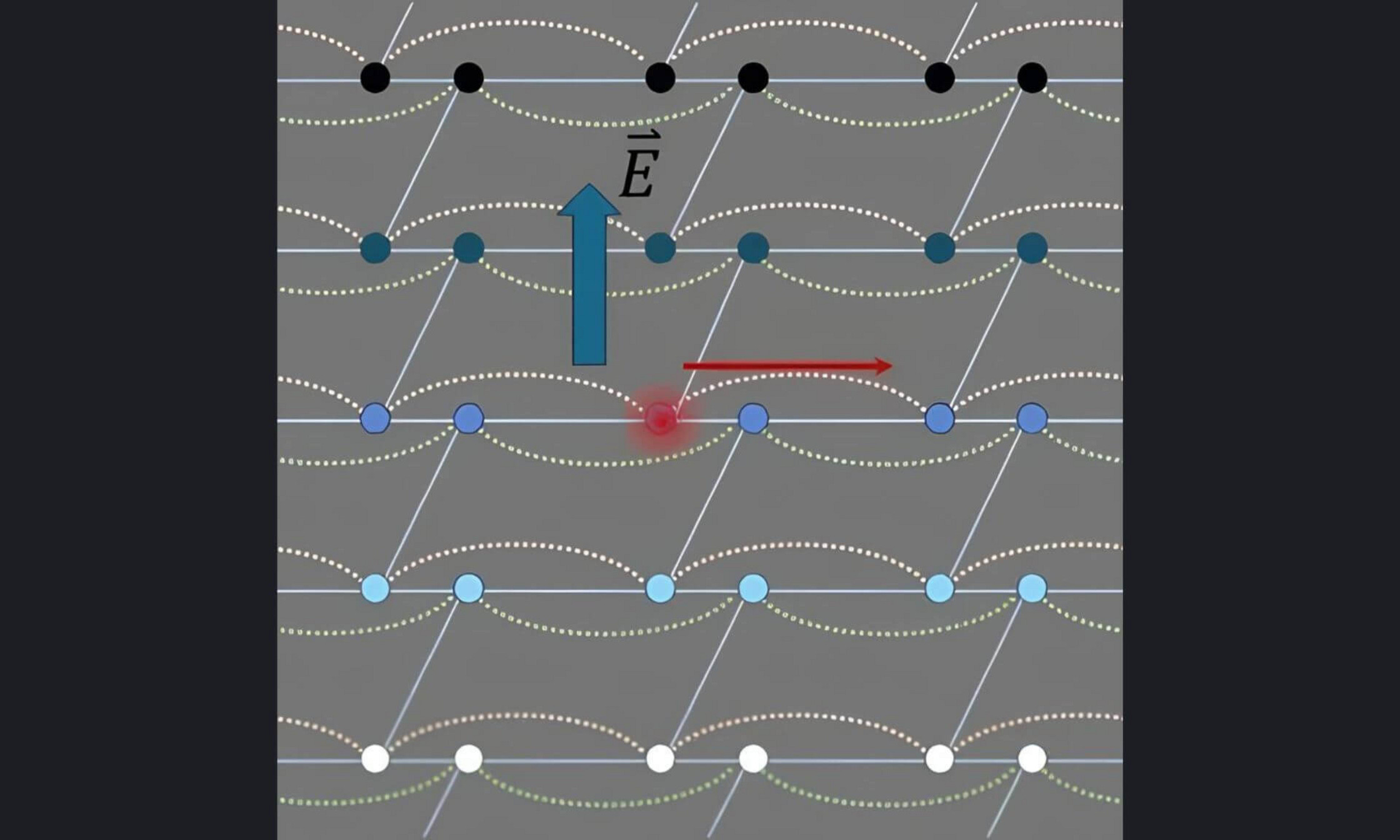

Simply put, the magnetic field causes the electrons, which are negatively charged, to drift sideways, creating a negative charge on one edge of the conducting strip and a positive charge on the opposite side.

For decades, this voltage difference has served as a diagnostic tool, both for measuring magnetic fields with precision and for characterizing a material's doping level, that is, the tiny, controlled amount of impurity added to a pure material to change how it conducts electricity.
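As a rough illustration of how the Hall voltage doubles as a measuring tool, the sketch below uses the standard textbook relation V_H = I·B / (n·e·t) for a flat strip of thickness t carrying current I in a perpendicular field B, with carrier density n. The relation is not stated in the article, and the function names and sample numbers are illustrative assumptions, not values from any experiment.

```python
# Elementary charge in coulombs (exact value in the 2019 SI).
E_CHARGE = 1.602176634e-19


def hall_voltage(current_a, field_t, carrier_density_m3, thickness_m):
    """Magnitude of the classical Hall voltage, V_H = I * B / (n * e * t)."""
    return current_a * field_t / (carrier_density_m3 * E_CHARGE * thickness_m)


def carrier_density(current_a, field_t, hall_voltage_v, thickness_m):
    """Invert the same relation to estimate the carrier density n (per m^3)."""
    return current_a * field_t / (hall_voltage_v * E_CHARGE * thickness_m)


def magnetic_field(current_a, hall_voltage_v, carrier_density_m3, thickness_m):
    """Invert the same relation to estimate the field B (tesla)."""
    return hall_voltage_v * carrier_density_m3 * E_CHARGE * thickness_m / current_a


# Hypothetical strip: 1 mA through a 1-micrometre-thick sample in a 0.5 T field,
# with an assumed carrier density of 1e22 per cubic metre.
v_h = hall_voltage(1e-3, 0.5, 1e22, 1e-6)
n_est = carrier_density(1e-3, 0.5, v_h, 1e-6)
b_est = magnetic_field(1e-3, v_h, 1e22, 1e-6)
```

Reading the relation one way gives the field from a known material; reading it the other way gives the carrier density, and hence the doping level, from a known field.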

In the 1980s, experiments at ultra-low temperatures with ultra-thin conductors (imagine a sheet of paper) revealed that under intense magnetic fields, this voltage difference rises with the field strength not in a straight line but in perfectly defined steps.

These steps, known as plateaus, are universal, independent of the material’s composition, shape or microscopic defects. Their values depend solely on two fundamental constants of the universe: the electron charge and the Planck constant.
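To make that dependence concrete, here is a minimal sketch based on the standard expression for the integer quantum Hall plateaus, in which the Hall resistance (Hall voltage divided by current) takes the values h / (ν·e²) for whole numbers ν = 1, 2, 3, and so on. The formula is textbook physics rather than something spelled out in the article, and the function name is an illustrative assumption.

```python
# Both constants are exact by definition in the 2019 SI.
PLANCK_H = 6.62607015e-34    # Planck constant, joule-seconds
E_CHARGE = 1.602176634e-19   # elementary charge, coulombs


def plateau_resistance(filling):
    """Hall resistance in ohms on the plateau labelled by the integer `filling`."""
    if filling < 1:
        raise ValueError("filling must be a positive integer")
    return PLANCK_H / (filling * E_CHARGE ** 2)


# Resistance of the first few plateaus; no material property enters anywhere.
plateaus = {nu: plateau_resistance(nu) for nu in range(1, 5)}
```

The first plateau comes out at roughly 25.8 kilohms, and each later one is that value divided by a whole number, which is why the steps look the same regardless of the sample.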

This is known as the quantum Hall effect, a discovery so important that it led to three Nobel Prizes in Physics: in 1985 for the discovery of the quantum Hall effect; in 1998 for the discovery of the fractional quantum Hall effect; and in 2016 for theoretical discoveries of topological phase transitions and topological phases of matter.

Until now, the quantum Hall effect had mainly been observed with electrons, whose electric charge makes them sensitive to electric and magnetic fields. Photons, the particles of light, are electrically neutral and thus immune to these forces.

Replicating the quantum Hall effect with light, therefore, posed a major challenge.